Panic makes bad law.

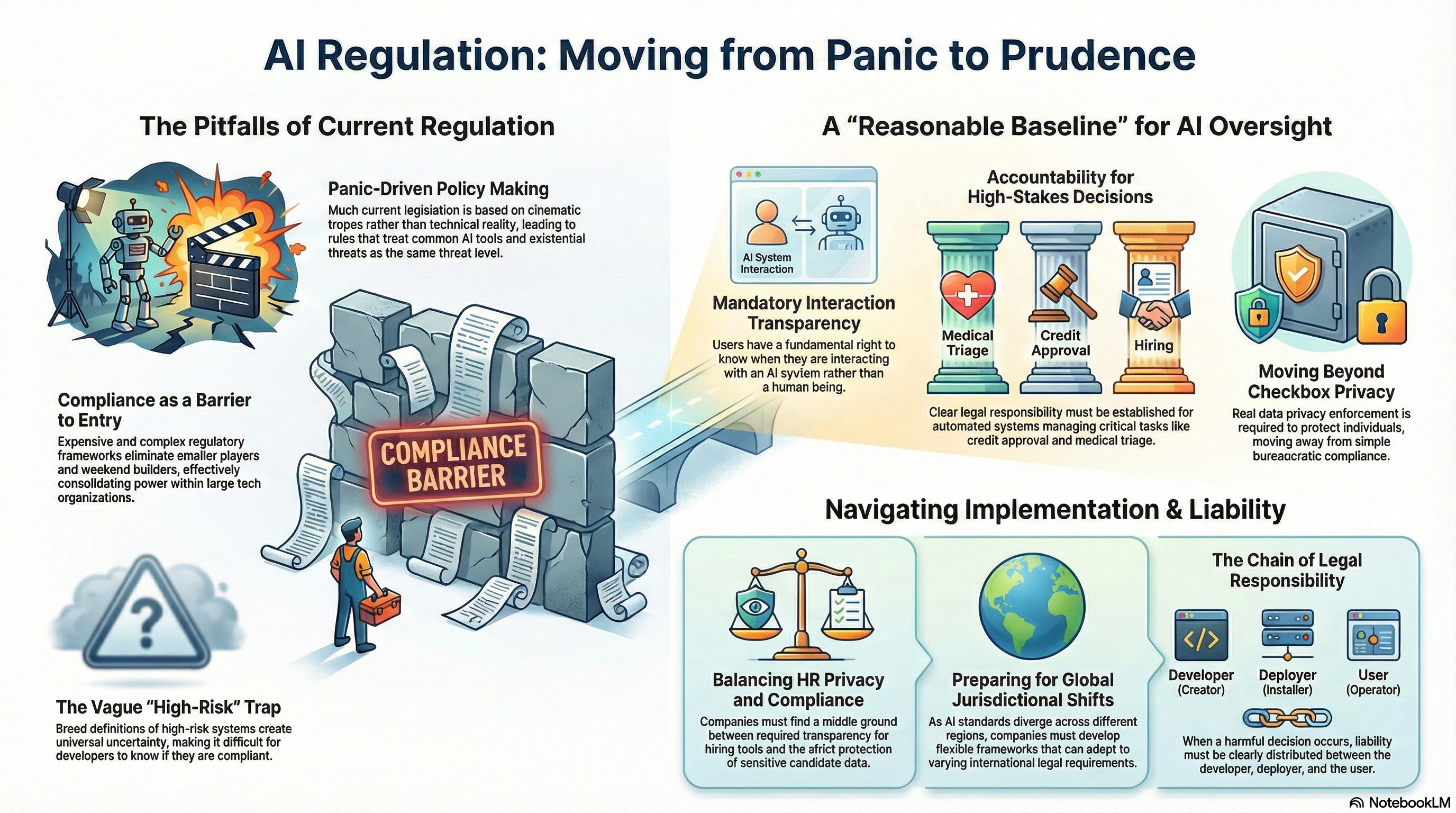

That’s what’s happening right now with AI regulation. Not thoughtful policy. Not measured oversight. Panic dressed up in legal language, written by people who have never trained a model, never built an agent, never used these tools for anything beyond a demo.

They’re regulating based on what they’ve seen in movies.

The Bill That Sounds Serious But Isn’t

Most AI legislation follows the same pattern. Vague language about “high-risk systems.” Requirements that are broad enough to mean everything and specific enough to mean nothing. Compliance frameworks that only large organizations can afford to navigate.

That last part is the tell.

When regulation is expensive to comply with, it doesn’t stop the powerful players. It eliminates the smaller ones. You end up concentrating power in exactly the hands people claim to be worried about.

That’s not safety. That’s consolidation with better PR.

What Over-Regulation Actually Does

The research happens anyway. The models get built anyway.

Regulation doesn’t stop the technology. It decides who gets to build it. Right now, the answer is trending toward whoever has a legal team big enough to manage the paperwork.

Weekend builders. Independent researchers. Small teams in countries outside the usual tech centers. They get squeezed out. Not because they’re dangerous — because compliance is expensive and nobody’s funding their legal department.

What Actually Makes Sense

Transparency requirements. You should know when you’re talking to an AI.

Accountability for high-stakes automated decisions — hiring, credit, medical triage. Clear lines of responsibility when a system gets it wrong.

Data privacy. Real enforcement, not checkbox compliance.

That’s it. That’s a reasonable baseline.

What doesn’t make sense: regulating capabilities nobody fully understands yet. Treating every AI application like a weapon. Writing rules so broad they create uncertainty for everyone and clarity for no one.

The Honest Problem

The people who understand this technology aren’t loud enough in these conversations. They should be.

Not to protect their interests, but to prevent policy that treats ChatGPT and Skynet as the same threat level.

They’re not. And the difference matters more than most regulators currently understand.

The technology isn’t waiting for the legislation to catch up.

Neither should the people who build it.